Picture this: it’s 2:07 a.m. A “production-ready” AI agent, green-lit after weeks of demos and spotless evaluation scores, has just caused real customer impact. Not because the model failed, but because reality did. The wrong tool was called. Permissions changed. User behavior drifted. Another agent behaved unexpectedly.

This is not a rare edge case.

It is the defining challenge of the agentic era.

As enterprises move from copilots to autonomous agents, a quiet realization is setting in: traditional AI-readiness signals are no longer reliable. Passing evaluations, green dashboards and clean demos do not guarantee real-world behavior. They only prove that the system behaved as expected under controlled conditions.

Reality, unfortunately, does not work that way.

The Underestimated Shift from Software to Systems

For decades, enterprise software followed a predictable pattern: deterministic logic, clear inputs and known failure modes. If something broke, it could usually be traced back to a line of code or a configuration change.

AI agents break this mental model.

An agent is not just software; it’s a system that reasons, plans, adapts, calls tools, interacts with other agents and operates in environments that change faster than specifications can be written. Its behavior is shaped not just by code, but by prompts, policies, context, memory and interaction dynamics.

This is why teams feel growing discomfort as they deploy agents at scale. The issue is no longer “Does it work?”

The question is, “What happens when it doesn’t—and will we see it coming?”

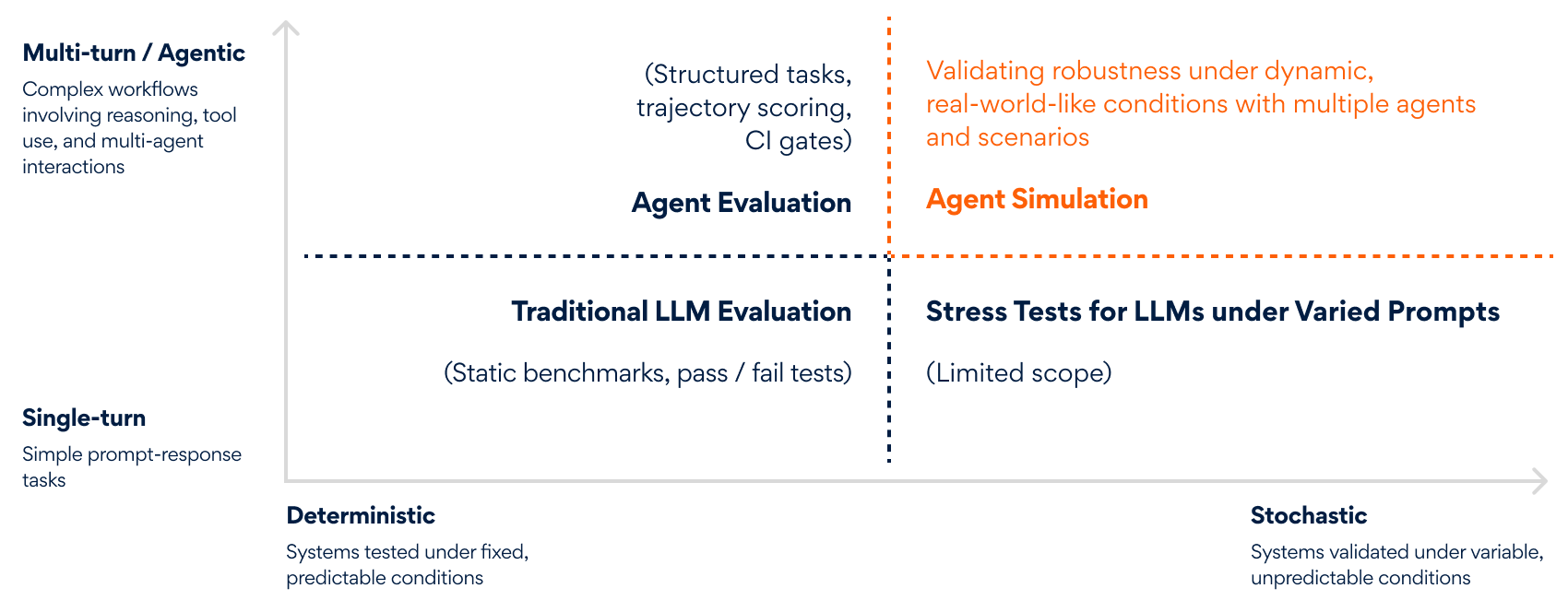

Why Evaluation Alone Creates a False Sense of Safety

Most organizations already “test” their agents. They run AI agent evaluations against curated datasets and measure accuracy, compliance and task completion. These are all necessary practices, but they are dangerously incomplete.

Evaluation is fundamentally confirmatory.

It checks known capabilities against predefined expectations. It assumes the world is stable, prompts are controlled and interactions are linear.

Agents don’t live in that world. They live in environments where:

- Users behave unpredictably

- Tools time out or return partial data

- Agents call incorrect tools

- Policies conflict under pressure

- Multiple agents interact in nonlinear ways

- Small deviations cascade into large outcomes

In these conditions, the qualities that matter most are not correctness alone, but adaptability, resilience and judgment under uncertainty. Static evaluation cannot account for these qualities. This is why teams end up with agents that look perfect on paper but prove fragile in production.
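To make the contrast between confirmatory evaluation and stress testing concrete, here is a minimal Python sketch. Everything in it is hypothetical and for illustration only: the `lookup_order` tool, the `naive_agent` and `resilient_agent` functions and the `make_flaky` wrapper that injects timeouts and partial payloads are assumptions, not taken from any product or framework. The point it shows is simple: the same agent that completes every clean run can break as soon as its tool misbehaves.

```python
import random

# Hypothetical tool (illustration only): returns a full order record.
def lookup_order(order_id: str) -> dict:
    return {"id": order_id, "status": "shipped", "eta_days": 2}

def make_flaky(tool, rng: random.Random, p_timeout: float, p_partial: float):
    """Wrap a tool so a fraction of calls time out or return partial data."""
    def wrapped(*args, **kwargs):
        roll = rng.random()
        if roll < p_timeout:
            raise TimeoutError("simulated tool timeout")
        result = tool(*args, **kwargs)
        if roll < p_timeout + p_partial:
            # Simulate a partial payload by dropping the "eta_days" field.
            result = {k: v for k, v in result.items() if k != "eta_days"}
        return result
    return wrapped

def naive_agent(tool, order_id: str) -> str:
    """Hypothetical agent that assumes the tool always succeeds in full."""
    record = tool(order_id)
    return f"Order {record['id']} arrives in {record['eta_days']} days"

def resilient_agent(tool, order_id: str, retries: int = 2) -> str:
    """Hypothetical agent that retries timeouts and degrades gracefully."""
    for _ in range(retries + 1):
        try:
            record = tool(order_id)
            break
        except TimeoutError:
            continue
    else:
        # Every attempt timed out: hand off instead of guessing.
        return "Order lookup is unavailable; escalating to a human."
    eta = record.get("eta_days")
    if eta is None:
        return f"Order {record['id']} is {record['status']}; ETA unknown."
    return f"Order {record['id']} arrives in {eta} days"

def run_trials(agent, tool, n: int) -> dict:
    """Run the agent n times and count completed versus crashed runs."""
    outcomes = {"completed": 0, "crashed": 0}
    for i in range(n):
        try:
            agent(tool, f"A{i}")
            outcomes["completed"] += 1
        except (TimeoutError, KeyError):
            outcomes["crashed"] += 1
    return outcomes

if __name__ == "__main__":
    print("clean:   ", run_trials(naive_agent, lookup_order, 100))
    stressed_tool = make_flaky(lookup_order, random.Random(0), 0.1, 0.1)
    print("stressed:", run_trials(naive_agent, stressed_tool, 100))
    hardened_tool = make_flaky(lookup_order, random.Random(0), 0.1, 0.1)
    print("hardened:", run_trials(resilient_agent, hardened_tool, 100))
```

With the plain `lookup_order` tool every trial completes, so a clean evaluation would pass. Wrapping the tool with `make_flaky` surfaces crashes in `naive_agent`, while `resilient_agent` retries timeouts and falls back to an escalation or an "ETA unknown" reply instead of failing. Seeding the random generator keeps the stress runs reproducible, so different agent versions can be compared against the same injected faults.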

Hard Lessons Learned from Other Industries

Autonomous vehicles are not validated by driving the same sunny road repeatedly. Financial trading systems are not trusted because they passed a benchmark. Manufacturing plants do not rely on live experimentation to discover failure modes. Telecom networks are not hardened through best-case scenarios.

All of these domains rely on simulation.

They deliberately create stressful, chaotic, adversarial conditions, not because they expect them to happen every day, but because when they do, the cost of being unprepared is enormous.

Simulation is how complex systems earn trust before they earn scale.

AI agents now sit squarely in this category of systems. Yet organizations continue to validate them as if they were static software components.

The Real Readiness Question to Ask

The most important question for agentic systems is no longer:

“Does the agent complete the task?”

It is: “How does the agent behave when the task becomes ambiguous, the environment shifts and the system is under stress?”

This reframes readiness as more than a checkbox.

Readiness is not a release gate; it is behavioral confidence built through exposure to complexity before customers experience it.

When teams start thinking this way, a different picture of pre-production emerges. It focuses less on validation and more on rehearsal and simulation: a space where agents can fail safely, repeatedly and informatively.

Moving from Static Confidence to Earned Trust

There’s a deeper implication here that goes beyond engineering.

As agents take on more autonomous responsibility by handling decisions, workflows and customer interactions, the tolerance for surprise shrinks. Trust becomes the currency of adoption, and trust cannot be asserted. It has to be earned.

Earned trust comes from understanding not just what a system does when things go right, but how it degrades when things go wrong. It comes from visibility into decision paths, from knowing where judgment holds and where it breaks, and from learning faster than reality can punish you.

In that sense, the future of agentic AI is not just about smarter models or better tools. It is about institutionalizing humility by accepting that we cannot predict every outcome and designing systems that learn before they fail publicly.

The Road Ahead

From assistants to collaborators to autonomous actors, agentic AI is moving fast. With that shift comes a responsibility to rethink how we define “ready.” The organizations that succeed will not be the ones with the flashiest demos, but the ones that take complexity seriously enough to confront it early.

To see how Persistent is tackling this challenge, take a look at Simul(AI)te, our AI agent simulator that surfaces risks, behaviors and edge cases before production.

Author’s Profile

Arun Kishorre Sannasi

Senior Consulting Expert, Persistent Systems

Aashis Tiwari

Senior Data Scientist – AI Labs, Persistent Systems