At ServiceNow Knowledge 2026, the conversation clearly shifted from optimization to orchestration, from workflows to agents and from systems of record to systems of action. As a ServiceNow Global Systems Integrator, we see this transition not as a momentary trend, but as a structural inflection point in how enterprises design, govern and scale AI-led work.

The reflections below capture an unfiltered, firsthand perspective from the conference floor, emphasizing what stood out, what feels different this time and where enterprises should tread carefully.

For organizations navigating agentic AI adoption, these insights reinforce a core belief we hold at Persistent: success will depend not just on tools, but on architecture, governance and the right partner to bring it all together.

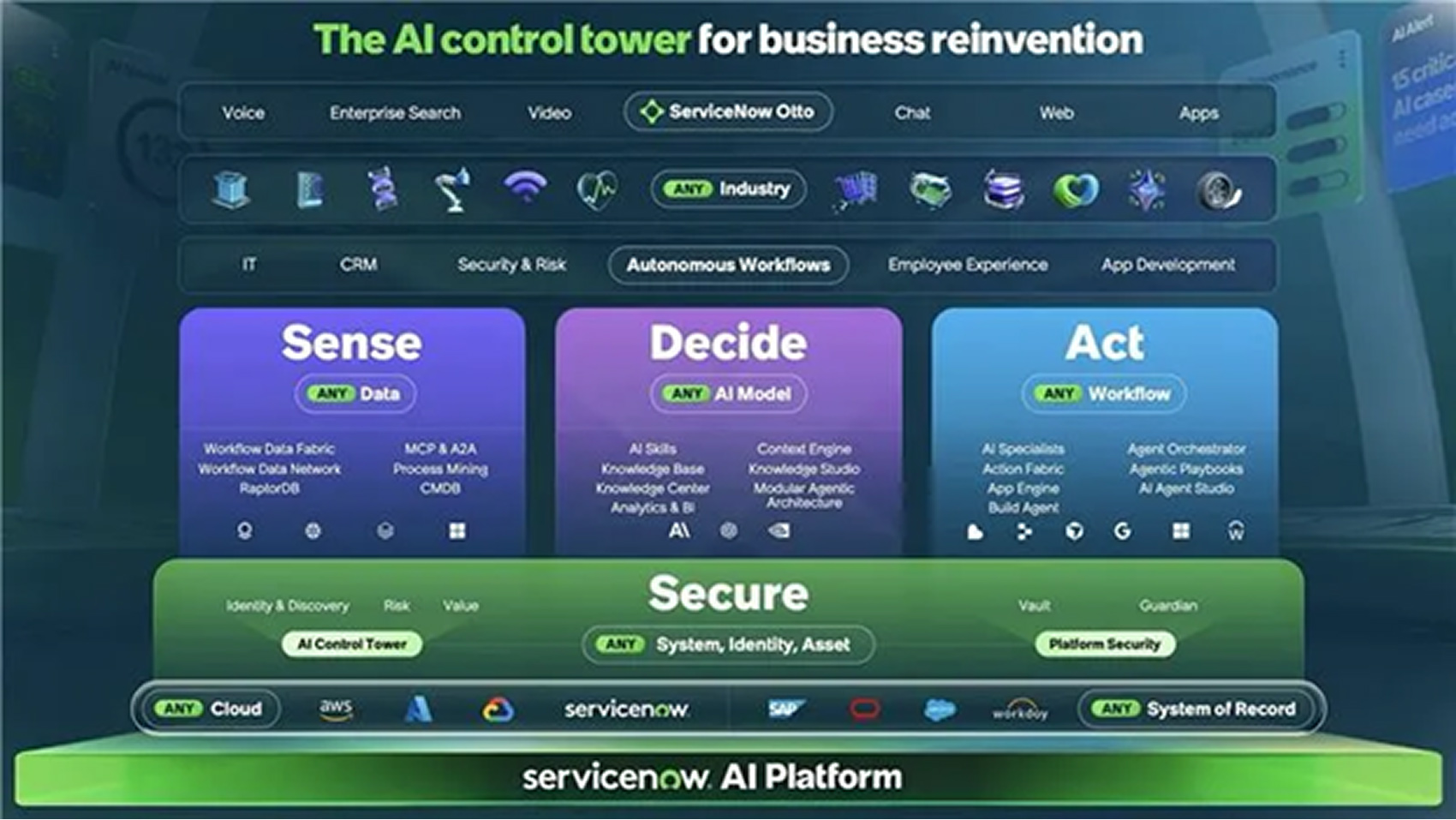

The Sense–Decide–Act Moment

The single slide that dominated the event captured ServiceNow’s vision succinctly: a Sense–Decide–Act architecture for agentic AI. It’s a simple framing, but an important one that acknowledges something many AI platforms gloss over: real enterprise AI is as much about control and intent as it is about intelligence.

Sense begins with Workflow Data Fabric, the layer that connects diverse data sources and extracts context at scale. The newly introduced AI Agent Advisor builds on this by identifying opportunities and recommending agents to automate specific workflows.

Decide is where ServiceNow differentiates meaningfully. Using context engines, AI models, CMDB data, historical resolutions and corporate policies, this stage focuses on planning before acting. It ensures decisions are verified against enterprise guardrails, a step that agent implementations skip surprisingly often.

Act is the execution layer. With planning already complete, actions are carried out using deterministic workflows and agentic playbooks via Action Fabric. The emphasis here is not creativity, but reliability, and I like that ServiceNow has resisted mixing planning and action into one opaque loop.
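To make the separation between planning and execution concrete, here is a minimal Python sketch of a Sense–Decide–Act pipeline. It is purely illustrative and does not use any ServiceNow API: the names `Context`, `Plan`, `FORBIDDEN_STEPS`, `sense`, `decide` and `act`, as well as the sample step names, are assumptions made up for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Context:
    """Output of the Sense stage: facts gathered about an incident."""
    incident_id: str
    affected_service: str
    severity: int  # 1 = most severe


@dataclass
class Plan:
    """Output of the Decide stage: ordered steps plus a guardrail verdict."""
    steps: list[str]
    approved: bool = False
    reasons: list[str] = field(default_factory=list)


# Hypothetical guardrail policy: steps that must never run automatically.
FORBIDDEN_STEPS = {"delete_database"}


def sense(raw_event: dict) -> Context:
    """Sense: turn a raw event into structured context."""
    return Context(raw_event["id"], raw_event["service"], raw_event["severity"])


def decide(ctx: Context) -> Plan:
    """Decide: build a plan, then verify it against guardrails before any action."""
    steps = ["restart_service", "notify_owner"]
    if ctx.severity == 1:
        steps.insert(0, "page_on_call")
    plan = Plan(steps=steps)
    violations = [s for s in plan.steps if s in FORBIDDEN_STEPS]
    plan.reasons = [f"forbidden step: {s}" for s in violations]
    plan.approved = not violations
    return plan


def act(plan: Plan) -> list[str]:
    """Act: execute an approved plan step by step, with no re-planning here."""
    if not plan.approved:
        raise PermissionError("; ".join(plan.reasons) or "plan was never approved")
    return [f"executed {step}" for step in plan.steps]


if __name__ == "__main__":
    event = {"id": "INC001", "service": "payments", "severity": 1}
    for line in act(decide(sense(event))):
        print(line)
```

The design choice worth noticing is that `act` refuses any plan that has not passed through `decide`, so execution stays deterministic and the guardrail check cannot be bypassed by accident.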

Operating System for Enterprise AI Agents

One of the defining moments of the conference was when Jensen Huang, Founder and CEO of NVIDIA, joined Bill McDermott, Chairman and CEO of ServiceNow, on stage and called ServiceNow the “Operating System for Enterprise AI Agents.” That statement landed, not because it was flashy, but because the platform vision supports it.

ServiceNow brings years of experience in managing complex business workflows, approvals and decision logic. More importantly, it holds deep enterprise context across data that, when used responsibly, explains the why behind past decisions, not just the what. That institutional memory is an asset many AI-native platforms simply do not have.

Enterprise Control Tower Dilemma

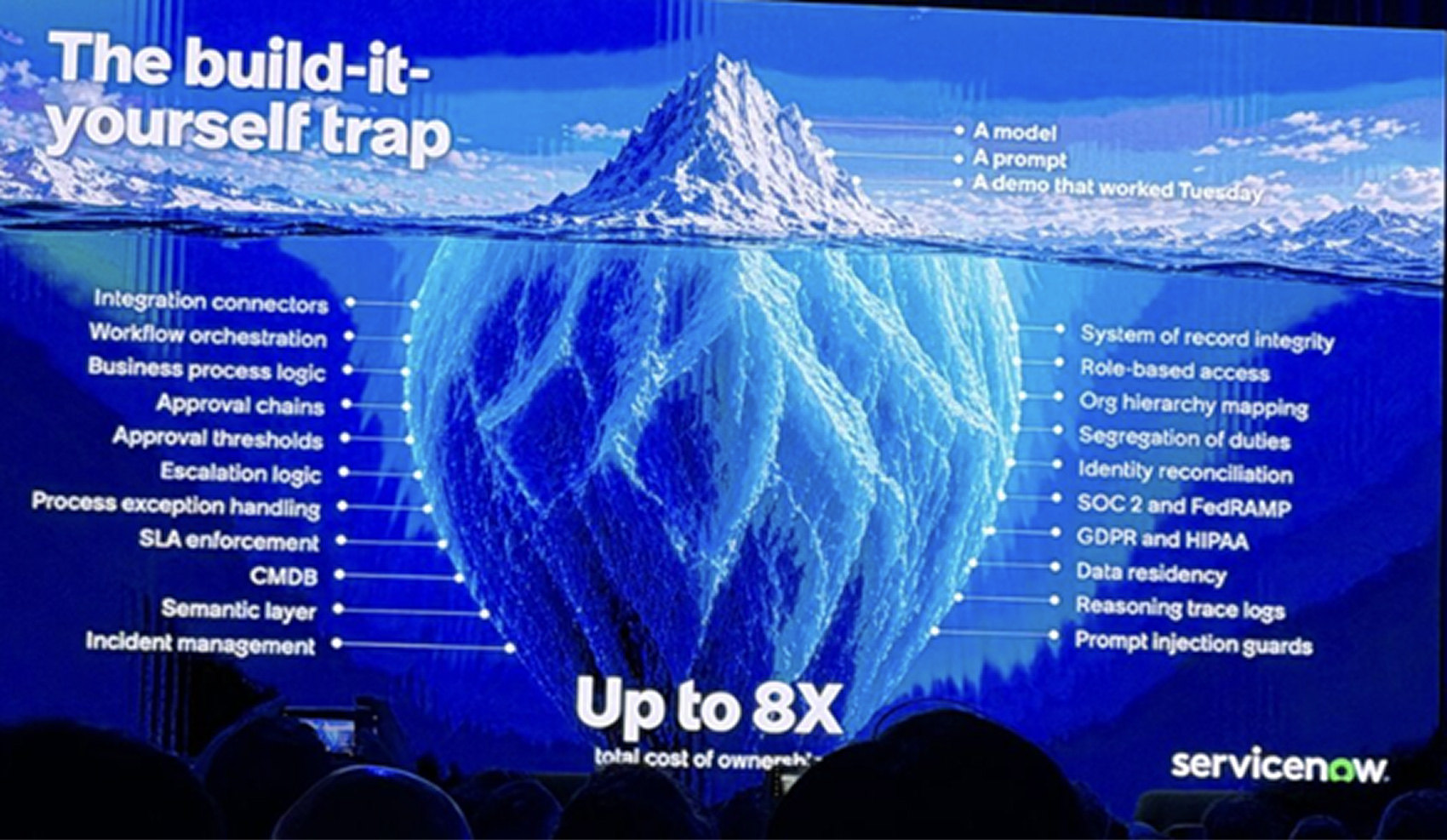

That said, the road ahead isn’t frictionless. Every major platform player now wants to be the agentic control plane or the central nervous system of the enterprise. For CIOs and CISOs, that creates a very real dilemma: whom do you allowlist to run your agents, access your systems and enforce your guardrails?

Some large enterprises are attempting to build their own AI control towers to retain control. While understandable, this often leads to what ServiceNow aptly called the “build it yourself trap”, where integration logic, security enforcement, compliance, identity reconciliation, audit trails and governance pile up beneath the surface. Done poorly, an in-house build results in significantly higher total cost of ownership and growing technical debt.

Where Persistent Fits In

One aspect that repeatedly came up in conversations at Knowledge, but often between the lines, was responsibility. As AI agents move closer to the execution layer of enterprise operations, the stakes change. These systems are no longer just recommending actions; they are beginning to take them.

That shift makes responsible AI not a compliance checkbox, but a design principle.

In practice, this means making intent explicit, decisions explainable and actions auditable. It means embedding policy awareness, human-in-the-loop controls and observability into agent lifecycles from day one, not retrofitting them after automation is already in motion. It also means recognizing that governance is as much an organizational construct as it is a technical one.
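As one hedged illustration of what "explicit intent, explainable decisions and auditable actions" could look like in code, the Python sketch below gates risky actions behind a human approver and writes every outcome to an audit log. Everything in it is hypothetical: `AuditRecord`, `run_with_approval`, the `risk` score and the `risk_threshold` default are invented for this example and do not correspond to any specific platform feature.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import Callable, Optional


@dataclass
class AuditRecord:
    intent: str                 # why the agent wants to act
    action: str                 # what it proposes to do
    rationale: str              # explanation of the decision
    approved_by: Optional[str]  # human approver, if one was required
    executed: bool
    timestamp: str


def run_with_approval(
    intent: str,
    action: str,
    rationale: str,
    risk: float,
    approver: Callable[[str, str], Optional[str]],
    audit_log: list,
    risk_threshold: float = 0.5,  # assumed cutoff for requiring a human
) -> AuditRecord:
    """Run low-risk actions directly; route risky ones to a human first."""
    approved_by = None
    if risk >= risk_threshold:
        # The approver returns their name to approve, or None to reject.
        approved_by = approver(action, rationale)
        executed = approved_by is not None
    else:
        executed = True
    record = AuditRecord(
        intent=intent,
        action=action,
        rationale=rationale,
        approved_by=approved_by,
        executed=executed,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )
    # Every decision is logged, including rejected ones.
    audit_log.append(json.dumps(asdict(record)))
    return record
```

A call such as `run_with_approval("free disk space", "purge_logs", "disk at 95%", 0.9, approver, log)` would only execute if `approver` returns a name, and either way the decision lands in `log` for later review.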

This is an area where platform capability alone is insufficient. Enterprises need partners who understand how responsible AI intersects with real operating models: security, risk, compliance, data ownership and accountability. At Persistent, we view responsible AI as inseparable from scale. If agents cannot be trusted, they cannot be expanded. And if they cannot be governed, they cannot be defended.

ServiceNow’s emphasis on guardrails, policy-driven decisioning and deterministic execution creates the right foundation. The responsibility then shifts to how these capabilities are contextualized, implemented and sustained within each enterprise environment. That’s where responsible AI stops being theoretical and starts becoming operational.

This is where partnerships matter. Adopting an AI control tower, whether ServiceNow’s or any other, requires deep domain knowledge, platform expertise and responsible AI practices baked in from day one.

At Persistent, our ServiceNow practice is focused on helping enterprises move beyond experimentation to measurable outcomes by customizing the platform to enterprise context, integrating it securely into existing landscapes and ensuring governance is not an afterthought. The goal isn’t to hand over the keys blindly, but to move faster with confidence.

Final Thought

Which platform ultimately dominates the enterprise agent ecosystem will become clear over time. What is already clear, echoing Jensen Huang’s comment on stage, is that agentic AI today isn’t eliminating work; it’s enabling people to pursue more ideas, more initiatives and more value simultaneously. In that sense, enterprises may feel busier than ever, but also more empowered.

And that, perhaps, is the most honest takeaway from Knowledge 2026.

Author Profile

Dattaraj Rao

Chief Data Scientist, AI Research Lab