Introduction

AI-powered coding tools are rapidly transforming software development, not just by suggesting snippets but by orchestrating end-to-end agentic development workflows that plan, generate and refactor code across entire services. These workflows promise accelerated delivery, reduced repetitive boilerplate and more time for teams to focus on core business challenges.

As agentic assistants such as GitHub Copilot, Claude Code and Cursor AI begin to participate in design discussions, trigger automated tests and open pull requests, high code quality remains critical to stability, security and long-term maintainability.

Why code quality still matters

- Hidden risks in AI-generated code: AI-generated code may appear correct and pass initial checks but can introduce redundancy, inefficient algorithms or security vulnerabilities if left unreviewed. These issues may not be evident until the code is deployed or scaled and can lead to system instability or compliance failures.

- Context and standards: AI tools operate on statistical patterns rather than a deep understanding of project-specific requirements or organizational standards. High-quality code ensures clarity, reliability and reusability, allowing teams to work collaboratively and manage growing codebases effectively.

- Human oversight is essential: The final gatekeeper for quality is still rigorous review and validation by experienced developers. Code reviews remain critical for catching last-mile issues, such as race conditions, resource leaks, security gaps and architectural misalignments, that automated agents cannot fully anticipate.

- Long-term maintainability: As AI accelerates the rate of code generation, poorly reviewed code accumulates technical debt, making updates and scaling more difficult over time. Rigorous quality assurance is essential to maintaining a stable, secure and efficient product.

Adopt Automated Quality Gate Tools

Adopting automated quality gate tools is crucial for maintaining high development standards as AI-powered, agentic workflows accelerate software delivery and shift more coding activity to autonomous assistants.

In an enterprise context, these quality gates act as governance guardrails that enforce objective measures of correctness, security and maintainability by integrating static analysis, code review and policy checks directly into the development workflow from the earliest stages.

This approach enables teams to catch defects, security vulnerabilities and maintainability issues early, promotes consistency across the codebase and provides fast feedback to developers and AI agents alike. It reduces costly post-release fixes and helps ensure that what ships is not only fast to build but also robust, compliant and easy to maintain over time.

Key capabilities to look for

- Static analysis: Analyze source code without executing it, using language-specific rules and data flow analysis to identify defects, risky patterns and maintainability issues early.

- Code smells and complexity: Flag high complexity, duplication and long methods that increase technical debt and hinder long-term maintenance.

- Vulnerability detection: Use SAST-style rules and taint analysis to surface injection risks, insecure deserialization, weak cryptography, secrets in code and other security hotspots.

- Coverage awareness: Combine test coverage signals with quality gates to ensure new or changed code remains adequately tested.

- Policy-driven gates: Enforce thresholds for vulnerabilities, reliability, maintainability and duplication to prevent risky changes from merging.
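To make the idea of a policy-driven gate concrete, here is a minimal Python sketch of how thresholds might be evaluated against measured metrics. The metric names and limits (`new_vulnerabilities`, `duplicated_lines_pct`, 80% coverage and so on) are illustrative assumptions, not the defaults of any particular tool; a real code-quality service would supply its own metrics and configuration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GateCondition:
    """One threshold in a quality gate (all names here are illustrative)."""
    metric: str
    limit: float
    higher_is_better: bool = False

    def passes(self, value: float) -> bool:
        # Coverage-like metrics must stay at or above the limit;
        # defect-like metrics must stay at or below it.
        if self.higher_is_better:
            return value >= self.limit
        return value <= self.limit


# Hypothetical thresholds for new or changed code; tune to your own policy.
DEFAULT_GATE = [
    GateCondition("new_vulnerabilities", 0),
    GateCondition("new_bugs", 0),
    GateCondition("duplicated_lines_pct", 3.0),
    GateCondition("max_function_complexity", 15),
    GateCondition("new_code_coverage_pct", 80.0, higher_is_better=True),
]


def evaluate_gate(metrics: dict[str, float], gate=DEFAULT_GATE) -> tuple[bool, list[str]]:
    """Return (passed, failure messages). A missing metric counts as a failure."""
    failures = []
    for cond in gate:
        if cond.metric not in metrics:
            failures.append(f"{cond.metric}: no measurement reported")
        elif not cond.passes(metrics[cond.metric]):
            op = ">=" if cond.higher_is_better else "<="
            failures.append(
                f"{cond.metric}: {metrics[cond.metric]} (required {op} {cond.limit})"
            )
    return (not failures, failures)
```

Treating a missing metric as a failure is a deliberate choice in this sketch: a change that skips coverage reporting should not pass the gate by omission.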

Pair Quality Checks with AI Agents

Expose code-quality services to AI agents through a standardized agent tooling protocol, such as the Model Context Protocol (MCP). This allows agents to query quality metrics, issues, hotspots and gate status during code generation and review, blending probabilistic generation with deterministic checks.

What this unlocks

- Issues and metrics on demand: Agents can fetch bugs, code smells, severities, duplications, complexity and coverage metrics to refine suggestions.

- Security hints at generation time: SAST-style findings and hotspot workflows help steer agents away from insecure patterns.

- Quality gates as guardrails: Pass or fail signals on defined thresholds help humans in the loop monitor agent output and take corrective action.
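The capabilities above can be pictured as a small set of named tools that an agent calls and receives structured results from. The sketch below is plain Python, not the MCP SDK: the tool names (`list_issues`, `gate_status`), the severity scale and the in-memory `FINDINGS` list are all hypothetical stand-ins for what a real code-quality service and protocol server would provide.

```python
import json

SEVERITY_ORDER = ["info", "minor", "major", "critical", "blocker"]

# Stand-in for results a real code-quality service would return.
FINDINGS = [
    {"file": "billing/invoice.py", "line": 42, "type": "vulnerability",
     "severity": "critical", "message": "SQL query built from unsanitized input"},
    {"file": "billing/invoice.py", "line": 88, "type": "code_smell",
     "severity": "minor", "message": "Function has too many branches"},
    {"file": "users/profile.py", "line": 17, "type": "bug",
     "severity": "major", "message": "Possible None dereference"},
]


def list_issues(file=None, min_severity="info"):
    """Return findings, optionally filtered by file and minimum severity."""
    floor = SEVERITY_ORDER.index(min_severity)  # ValueError on unknown severity
    return [
        f for f in FINDINGS
        if (file is None or f["file"] == file)
        and SEVERITY_ORDER.index(f["severity"]) >= floor
    ]


def gate_status():
    """Fail the gate if any critical or blocker finding is open."""
    blocking = list_issues(min_severity="critical")
    return {"status": "failed" if blocking else "passed",
            "blocking_issues": len(blocking)}


# Registry of tools the agent is allowed to call.
TOOLS = {"list_issues": list_issues, "gate_status": gate_status}


def call_tool(name, arguments=None):
    """Dispatch a tool call by name and return a JSON string for the agent."""
    if name not in TOOLS:
        return json.dumps({"error": f"unknown tool: {name}"})
    try:
        result = TOOLS[name](**(arguments or {}))
    except (TypeError, ValueError) as exc:
        # Bad argument names or values are reported back, not raised.
        return json.dumps({"error": str(exc)})
    return json.dumps({"result": result})
```

An agent working on `billing/invoice.py` could, for example, call `call_tool("list_issues", {"file": "billing/invoice.py", "min_severity": "major"})` before proposing a change, then call `gate_status` afterwards to confirm nothing blocking remains.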

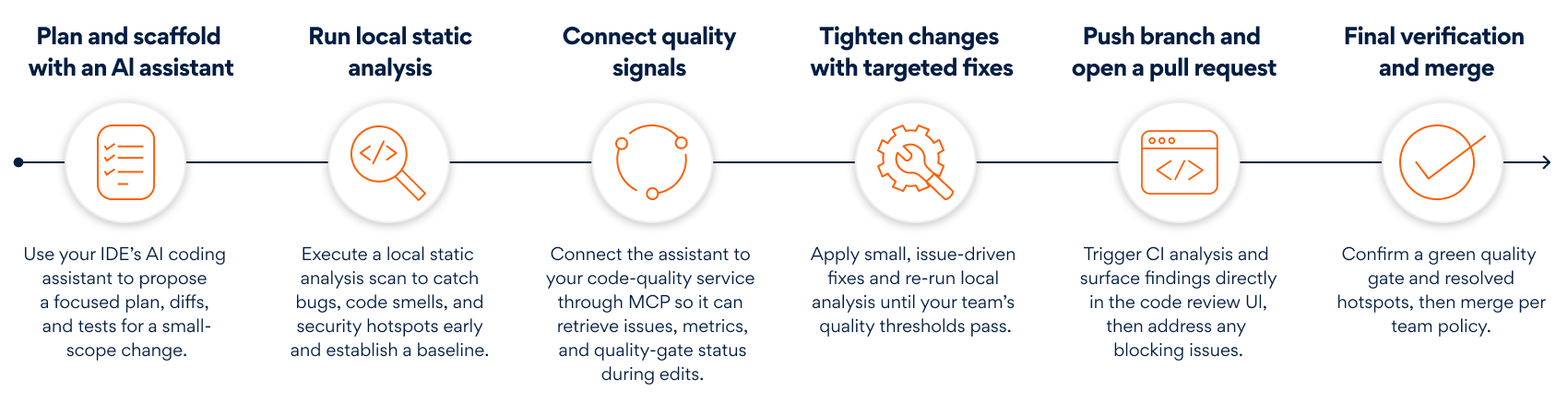

Development Workflow with AI Agents and Quality Gates

Here is a practical, end-to-end developer workflow that uses an IDE with an agentic coding tool, a code-quality service and an MCP server that exposes the code-quality service to the agent.

Fig 1. Developer workflow with an agentic coding tool and code quality gates (Source: Persistent)
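One way such a workflow might loop between the agent and the quality checks is sketched below. It does not reproduce the exact steps in the figure; it assumes a simple generate, check and refine cycle with a bounded number of attempts, after which the change is handed to a human. The `fake_agent` and `fake_checker` functions are hypothetical stand-ins for the agentic coding tool and the code-quality service.

```python
from typing import Callable


def run_with_quality_gate(
    generate: Callable[[str, list[str]], str],
    check: Callable[[str], list[str]],
    task: str,
    max_attempts: int = 3,
) -> dict:
    """Generate code, check it, and feed findings back until clean or out of attempts."""
    feedback: list[str] = []
    code = ""
    for attempt in range(1, max_attempts + 1):
        code = generate(task, feedback)   # agent drafts or revises the change
        feedback = check(code)            # deterministic quality checks
        if not feedback:
            # Passing the gate does not skip review; it only makes the
            # change eligible for human code review.
            return {"status": "ready_for_review", "attempts": attempt, "code": code}
    # Gate still failing: escalate to a developer with the open findings.
    return {"status": "needs_human", "attempts": max_attempts,
            "code": code, "open_issues": feedback}


def fake_agent(task: str, feedback: list[str]) -> str:
    # Stand-in agent: the first draft uses eval(); it switches to a safer
    # conversion only after the checker complains about eval.
    if any("eval" in item for item in feedback):
        return "value = int(user_input)\n"
    return "value = eval(user_input)\n"


def fake_checker(code: str) -> list[str]:
    # Stand-in quality service with a single hard-coded rule.
    return ["avoid eval() on untrusted input"] if "eval(" in code else []
```

Calling `run_with_quality_gate(fake_agent, fake_checker, "parse a number")` exercises both paths of the stand-in agent: a first draft that fails the check and a revision that passes it. The attempt cap keeps an agent from looping indefinitely on a finding it cannot resolve, which is where the human in the loop takes over.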

Closing Thoughts

AI-powered coding tools and agentic workflows promise significant gains in speed and scale, but delivering software that is safe, maintainable and reliable still requires deliberate governance and a consistent focus on quality.

By pairing agentic assistants with policy-driven quality gates and regular code reviews, teams can keep pace with faster development cycles while ensuring what ships is robust, compliant and trusted. Focusing early on standards, automated checks and maintainability helps reduce technical debt, prevent costly errors and keep the codebase adaptable as AI capabilities continue to evolve.

Ultimately, AI-accelerated engineering works best when autonomous agents operate within clear guardrails and human engineers remain accountable for decisions, architecture and long-term outcomes, combining the efficiency of AI with human judgment and rigorous engineering discipline.

Author’s Profile

Shannon Vaz

Lead Software Engineer, Corporate CTO Organization BU, Persistent Systems