Agility is at a premium in today’s business world. The agile delivery model, which brings continuous, speedy deployments and a greater ability to monitor apps, is where the industry is collectively moving. This shift has spurred enterprises to adopt container technologies in order to execute the agile delivery model well.

It is no surprise that the adoption of containers is at an all-time high. What is surprising is the amount of misinformation circulating about containers. In this article, I plan to demystify containers and strengthen the case for adopting them.

Containers: A definition

A ‘container’ is a standardized unit of software that packages code and all its dependencies, so the application runs quickly and reliably from one computing environment to another.

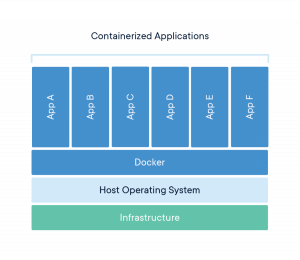

Docker is a popular container platform that provides the choice, agility, and security needed to usher in a new era of application computing. A Docker container image is a lightweight, standalone, executable package of software that includes everything needed to run an application: code, runtime, system tools, system libraries, and settings.

However, if we drill down to the basics, containers are nothing but isolated groups of processes that run on a single host and share a set of common features. Some of these features, such as namespaces and control groups (cgroups), are built directly into the Linux kernel.

Containers: An overview

Container technology was brought into the mainstream by Docker, which launched in 2013 as the open-source Docker Engine. This paved the way for several other tools and platforms built around container technology. Some of the prominent ones are:

- Kubernetes, Swarm (open-source cluster management)

- Red Hat Atomic, VMware Photon, RancherOS, Ubuntu Core (Linux distributions optimized for running containers)

- OpenShift, Rancher Labs, Docker, Pivotal (enterprise container platform software suites)

Many people dismiss containers as cheap virtual machines. This is not just technically untrue; it also leads enterprises to adopt faulty container strategies. It is therefore extremely important to understand the true worth of containers in the agile delivery model.

Now that we are clear about what a container is and the tools and platforms that it has engendered, we can move on to understanding why they are so beneficial in the agile delivery model.

Containers: Top benefits

1) Platform Independence

Portability is a major benefit of containers, since a container wraps up an application with everything it needs to run, such as configuration files and dependencies. This enables users to run applications easily and reliably in different environments: local desktops, physical or virtual servers, testing, staging or production environments, and public or private clouds.

2) Resource efficiency and density

Since containers share the host’s kernel instead of each bundling a full guest operating system, they use fewer resources. A container image often measures only a few dozen megabytes, making it possible to run many more containers than VMs on a single server. Because containers achieve higher utilization of the underlying hardware, less hardware is needed, which reduces bare-metal costs as well as datacentre costs.
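To make the density argument concrete, here is a rough back-of-the-envelope sketch. It assumes memory is the only limiting resource, and every figure in it (a 64 GB host, 2 GB per VM including its guest OS, 256 MB per container) is a hypothetical placeholder rather than a measurement. The function name `instances_per_host` is likewise made up for this illustration.

```python
def instances_per_host(host_memory_mb, per_instance_mb, reserved_mb=0):
    """Estimate how many instances fit on one host, counting memory only.

    This deliberately ignores CPU, disk, and network limits.
    """
    usable_mb = host_memory_mb - reserved_mb
    return max(usable_mb // per_instance_mb, 0)


HOST_MB = 64 * 1024  # hypothetical 64 GB server

# Assumed footprints: a VM carries a full guest OS, a container does not.
vm_count = instances_per_host(HOST_MB, per_instance_mb=2048)
container_count = instances_per_host(HOST_MB, per_instance_mb=256)

print(f"VMs per host:        {vm_count}")
print(f"Containers per host: {container_count}")
```

With these assumed numbers the same server holds eight times as many containers as VMs. Real ratios depend heavily on the workload, so treat the result as an illustration of the reasoning, not a benchmark.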

3) Effective isolation and resource sharing

Although containers run on the same server and share its resources, they are isolated from one another. If one application crashes, other containers running the same application keep working unaffected. This isolation also serves to decrease security risks. For instance, if one application is hacked or infected by malware, the resulting damage is far less likely to spread to the other running containers.
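Crash isolation can be illustrated with ordinary operating-system processes. The sketch below is only an analogy, not a container runtime: it starts two separate Python processes, lets the first one fail, and shows that the second still finishes normally. The helper name `run_isolated` is hypothetical.

```python
import subprocess
import sys


def run_isolated(snippets):
    """Run each code snippet in its own Python process and return the exit codes."""
    exit_codes = []
    for snippet in snippets:
        result = subprocess.run(
            [sys.executable, "-c", snippet],
            capture_output=True,  # keep child output out of our own stdout
        )
        exit_codes.append(result.returncode)
    return exit_codes


# The first "application" crashes; the second is unaffected.
codes = run_isolated(["raise SystemExit(1)", "print('still healthy')"])
print(codes)
```

Containers add stronger boundaries than plain processes (separate filesystems, network stacks, and resource limits), but the principle is the same: a failure in one unit does not take its neighbours down with it.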

4) Speed: Start, create, replicate or destroy containers in seconds

Containers are lightweight and can start in less than a second, since they do not require an operating system boot. Creating, replicating or destroying containers is also just a matter of seconds, which greatly speeds up development, time to market, and operations.

5) Immense and smooth scaling

A major benefit of containers is that they allow horizontal scaling, which means that one can add more identical containers within a cluster to scale out rapidly. With smart scaling, where you run only the containers needed at any given moment, you can reduce your resource costs drastically and accelerate your return on investment. Container technology and horizontal scaling have been used by major companies like Google, Twitter, and Netflix for years now.
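The "run only what you need" idea can be sketched as a simple sizing rule. This is a minimal illustration, assuming load is measured in requests per second and each identical container handles a fixed, known capacity. The function name `replicas_needed`, its parameters, and the bounds are hypothetical, and this is not how any particular orchestrator implements autoscaling.

```python
import math


def replicas_needed(requests_per_second, capacity_per_replica,
                    min_replicas=1, max_replicas=50):
    """Return how many identical containers to run for the current load.

    The result is clamped to [min_replicas, max_replicas].
    """
    if capacity_per_replica <= 0:
        raise ValueError("capacity_per_replica must be positive")
    wanted = math.ceil(requests_per_second / capacity_per_replica)
    return max(min_replicas, min(wanted, max_replicas))


# Assume each container comfortably serves 100 requests per second.
for load in (0, 950, 10_000):
    print(load, "req/s ->", replicas_needed(load, 100), "containers")
```

Scaling out means raising the replica count as load grows, and scaling in means lowering it again when load drops, which is where the cost savings come from.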

6) Operational simplicity

In contrast to traditional virtualization, where each VM has its own OS, containers execute application processes in isolation from the underlying host OS. This means that the host OS does not need application-specific software installed to run applications, which makes it simpler to manage host systems and quickly apply updates and security patches.

7) Improved developer productivity and development pipeline

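A container-based infrastructure brings the advantages above into the development pipeline: the same container runs reliably on a developer’s desktop, in testing, in staging and in production, and containers can be created or destroyed in seconds. Together, these make for a faster and more effective development pipeline.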

Each of these benefits helps enterprises become more efficient and agile in their processes. In a business environment that clearly spells go agile or go bust, understanding and adopting containers becomes that much more vital. In the second part of this two-part blog post, we will focus on the best practices for containerization, that is, the considerations and guidelines for successfully migrating applications to containers.