GIL stands for Global Interpreter Lock.

In this article, we will talk about the history of the GIL.

What problems existed, and how does the GIL solve them?

How do they still affect us today, and how might that change in the future?

Back in 1992, Python was pretty new in the tech industry, and engineers had started talking about a fancy thing called threads.

Threads are lightweight units of execution that run separately but share some state, such as global variables, with each other.

At the time, not all operating systems supported threads, and computers did not have multiple CPUs; they only had one. However, there was soon talk of multi-core computers, which would make way for multi-threaded environments. So people started using threads, and thus Python with threads came into existence.

Understanding the Problem in Python

Python has a bunch of global variables, the equivalent of module-level globals in C, so if you have multiple threads and they try to change a single module attribute simultaneously, something terrible can happen.

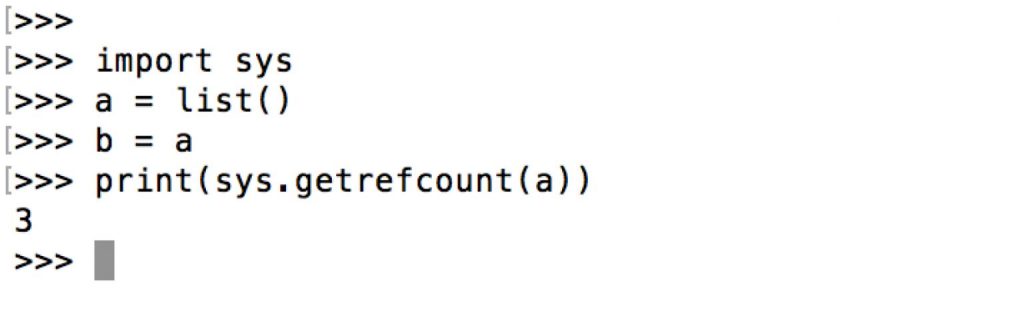

To understand this, we first need to understand reference counting, which CPython uses to manage memory. In simple terms, an object's reference count is the number of places currently interested in that object. CPython increments the count whenever a new reference is created and decrements it whenever one goes away. When the count reaches zero, CPython frees the memory held by that object.

In the example shown, the reference count is three because sys.getrefcount() holds a reference to the object while examining it, so the call itself counts as one. Once the call completes, that reference is dropped.
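The counting described above can be observed with a minimal sketch like the one below. The function and variable names are made up for illustration, and the absolute numbers reported by sys.getrefcount() can differ between CPython versions, so the sketch compares counts with each other instead of relying on a fixed value.

```python
import sys


def refcount_demo():
    """Return the counts seen as names are bound to and removed from one object."""
    obj = []                          # one reference: the name `obj`
    one_name = sys.getrefcount(obj)   # may include a temporary reference held by the call
    alias = obj                       # a second name for the same object
    two_names = sys.getrefcount(obj)
    del alias                         # dropping the name decrements the count again
    after_del = sys.getrefcount(obj)
    return one_name, two_names, after_del


one_name, two_names, after_del = refcount_demo()
print(one_name, two_names, after_del)
```

Binding a second name raises the count by exactly one, and deleting that name brings it back to where it started.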

These things used to work absolutely fine when there were no threads.

Now, let’s take a look at the problem with threads.

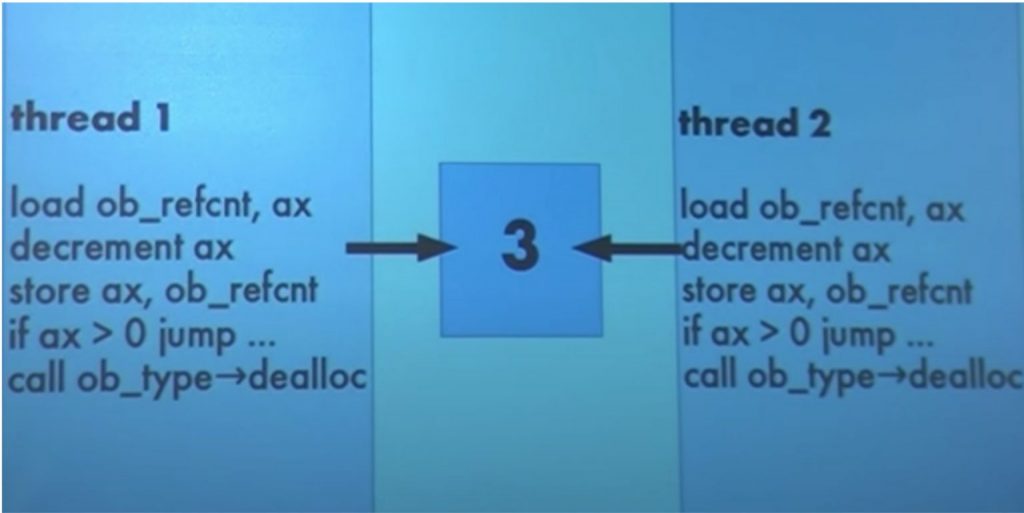

There are two threads, and the object has a reference count of three. Each thread loads the reference count into ax (a register private to that thread), decrements the value there, and then stores the result back into the object's reference count.

If both threads run in parallel and decrement the reference count at the same time, the stored value will be two. The correct value is one, because each of the two threads decremented the count by one. This is a bug: the stored count is one higher than it should be, so it will never reach zero. The object will live forever and its memory will never be freed. This is called a memory leak.
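The lost update can be replayed deterministically with a small simulation. This is only a sketch: the two "threads" are simulated by a list of steps rather than real threads, and run_schedule and the schedule format are hypothetical names invented for this example.

```python
def run_schedule(refcount, schedule):
    """Replay load/decrement/store steps for two simulated threads.

    `schedule` is a list of (thread_id, step) pairs; each thread keeps its
    own private register, like `ax` in the diagram.
    """
    registers = {}
    for thread_id, step in schedule:
        if step == "load":
            registers[thread_id] = refcount   # copy the shared value into the register
        elif step == "decrement":
            registers[thread_id] -= 1         # work on the private copy
        elif step == "store":
            refcount = registers[thread_id]   # write the private copy back
    return refcount


STEPS = ("load", "decrement", "store")

# One thread finishes all three steps before the other starts.
serial = [(1, s) for s in STEPS] + [(2, s) for s in STEPS]

# Both threads perform each step at the same time, as in the diagram.
interleaved = [(t, s) for s in STEPS for t in (1, 2)]

print(run_schedule(3, serial))       # 1
print(run_schedule(3, interleaved))  # 2
```

In the interleaved schedule both threads load the value three before either stores, so one of the two decrements is lost.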

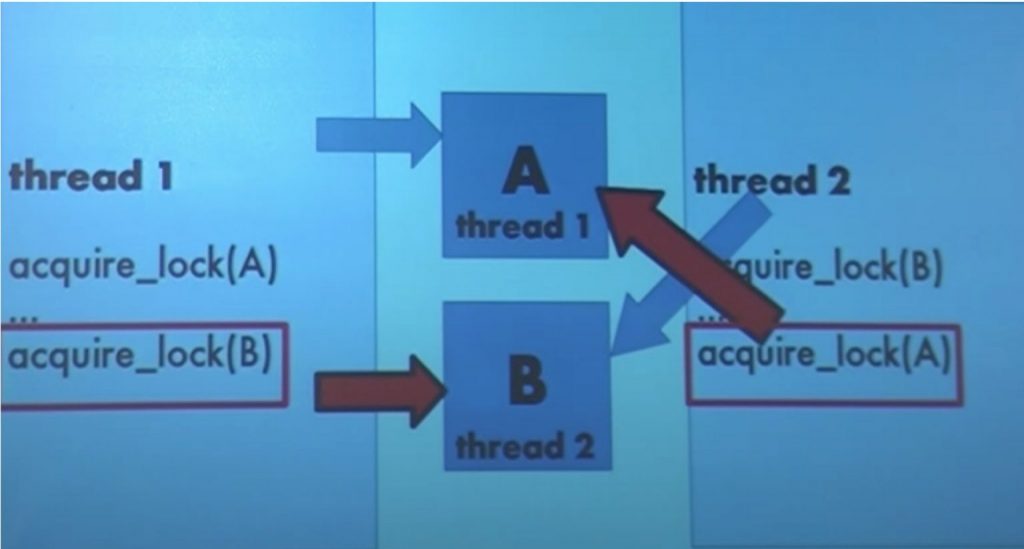

To resolve this bug, Python introduces a lock. The concept is really simple: a lock is an object that only one thread can hold at a time, and the rule is that any thread that wants to touch the shared object must hold the lock first. Only when one thread releases the lock can a second thread acquire it and start working with the object. Once a program has more than one lock, however, this solution introduces a new bug, referred to as deadlock.
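The locking rule can be sketched with threading.Lock from the standard library. The counter below is an ordinary Python integer standing in for a reference count, and the names refcount_lock and release_references are assumptions made for this illustration, not anything CPython uses internally.

```python
import threading

refcount = 4000
refcount_lock = threading.Lock()   # the single lock guarding `refcount`


def release_references(times):
    """Decrement the shared counter `times` times, one locked step at a time."""
    global refcount
    for _ in range(times):
        with refcount_lock:        # only one thread can be inside this block
            refcount -= 1          # load, decrement, store happen without interruption


threads = [threading.Thread(target=release_references, args=(1000,)) for _ in range(4)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(refcount)  # 0
```

Because every decrement happens while the lock is held, no update is lost and the counter ends at zero.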

Two threads are running in parallel. Thread1 acquires lock A and thread2 acquires lock B. On the next line, thread1 wants to acquire lock B and thread2 wants to acquire lock A, which neither can do because neither lock has been released yet. Each thread waits on the other forever. This situation is a deadlock.
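The two-lock scenario can be reproduced safely with a sketch like the following. To keep the program from hanging, each thread gives up on its second lock after a timeout; the barriers only exist to force the problematic ordering, and every name here is hypothetical.

```python
import threading

lock_a = threading.Lock()
lock_b = threading.Lock()
both_hold_first = threading.Barrier(2)   # passes once both threads hold their first lock
both_tried = threading.Barrier(2)        # passes once both threads finished their attempt
got_second = {}


def worker(name, first, second):
    with first:
        both_hold_first.wait()
        # A plain second.acquire() would block forever here;
        # the timeout lets the demonstration end.
        acquired = second.acquire(timeout=0.2)
        got_second[name] = acquired
        both_tried.wait()                # keep holding `first` until both attempts are over
        if acquired:
            second.release()


t1 = threading.Thread(target=worker, args=("thread1", lock_a, lock_b))
t2 = threading.Thread(target=worker, args=("thread2", lock_b, lock_a))
for t in (t1, t2):
    t.start()
for t in (t1, t2):
    t.join()

print(got_second)
```

Neither thread manages to acquire its second lock. Without the timeout, both would wait forever.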

Global Interpreter Lock (GIL)

Python introduced the GIL to solve these problems with threads.

The rule is simple: there is one lock (GIL) and you have to hold it to interact with the CPython interpreter in any way, whether you want to run byte code, allocate memory, or perform any C API call.

Ramifications of the GIL

Mostly, there are two types of programs – I/O bound & CPU bound.

I/O-bound programs spend most of their time waiting for input/output operations to complete, for instance reading from or writing to a socket, reading from or writing to a file, or talking to the screen. Under the GIL, I/O-bound multi-threaded programs work really well. This is because you do not need to hold the GIL while waiting on these operations, so a thread can drop it and let other threads use it. Python and C libraries are very good at dropping the GIL when they don’t need it.

CPU-bound programs spend their time computing something, and to compute you have to hold the GIL. So in a multi-threaded environment only one thread can hold the GIL at a time, and your program effectively becomes single-threaded. This is the aspect of the GIL that everybody talks about.
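The difference between the two kinds of programs can be measured with a rough sketch like the one below. It assumes time.sleep() as a stand-in for a blocking I/O call and a countdown loop as a stand-in for computation; the helper names are invented for this example, and the exact timings depend on the machine and the Python build.

```python
import threading
import time


def io_task():
    time.sleep(0.3)   # stands in for a blocking socket or file call; sleeping releases the GIL


def cpu_task():
    n = 2_000_000
    while n:          # pure Python byte code, so the thread needs the GIL throughout
        n -= 1


def run_in_threads(task, count):
    """Run `task` in `count` threads and return the elapsed wall-clock seconds."""
    threads = [threading.Thread(target=task) for _ in range(count)]
    start = time.perf_counter()
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return time.perf_counter() - start


def run_sequentially(task, count):
    """Run `task` `count` times in the current thread and return the elapsed seconds."""
    start = time.perf_counter()
    for _ in range(count):
        task()
    return time.perf_counter() - start


io_threaded = run_in_threads(io_task, 4)
cpu_sequential = run_sequentially(cpu_task, 4)
cpu_threaded = run_in_threads(cpu_task, 4)
print(f"I/O, 4 threads: {io_threaded:.2f}s (one after another would take about 1.2s)")
print(f"CPU, sequential: {cpu_sequential:.2f}s, 4 threads: {cpu_threaded:.2f}s")
```

The four sleeping threads overlap and finish in roughly the time of one sleep. On a build with the GIL, the threaded CPU run is not expected to beat the sequential one.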

Research on the GIL

Dave Beazley, a Python enthusiast, did some research in 2009 and published results showing that CPU-bound multi-threaded code could run slower than the equivalent single-threaded code, and slower still on a machine with multiple cores.

Python 2 has sys.getcheckinterval(). It returns a number that defines how often the interpreter is supposed to switch between threads that are running Python byte code. The idea is that every thread should give the others a chance to take the GIL, so that all threads get a fair share of time holding it.

In other words, this value is the number of byte code instructions a thread is supposed to run before it has to stop and give another thread a chance to run.

In Python 2.7, this number is set to 100.

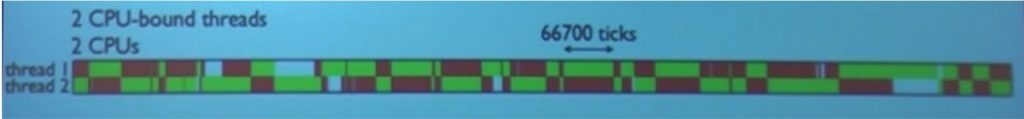

Explanation 1: This diagram shows us that the effective number is not 100. There are two threads in the diagram; red means the thread wants to run but can’t because another thread has the GIL, and green means the thread is holding the GIL and running right now. The x-axis is, of course, time.

You can see in the diagram that one check interval lasted 66,700 ticks, which means the thread ran 667 times longer than it should have been allowed to. So, something terrible is going on here.

Explanation 2: Even worse things happen when you have one I/O-bound thread and one CPU-bound thread. You can see in the diagram that the CPU-bound thread is not handing the GIL over to the I/O-bound thread.

Why are these scenarios happening?

The reason is that, after the CPU-bound thread releases the GIL, it tries to reacquire it within nanoseconds, competing with the other threads that are also trying to acquire it. The CPU-bound thread, however, kept winning that race each time, so the other threads never got a chance to acquire the GIL. This design of the GIL implementation was therefore not effective.

Solution: The fix for this came in Python 3.2, which added a new static variable, int gil_drop_request = 0;

This is a flag that signals that another thread is waiting. The next time the running thread drops the GIL, the implementation makes sure the waiting thread gets to run before the original thread can reacquire it. With this fix, most of the bad behavior went away.
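From Python 3.2 onward, the tick-based check interval is replaced by a time-based switch interval, which can be read and changed from Python code. The sketch below only uses sys.getswitchinterval() and sys.setswitchinterval(); the variable names are illustrative, and the gil_drop_request flag itself is internal to the interpreter and not exposed here.

```python
import sys

default_interval = sys.getswitchinterval()   # seconds; 0.005 unless it has been changed
print(f"switch interval: {default_interval * 1000:.1f} ms")

sys.setswitchinterval(0.01)                  # ask running threads to yield every 10 ms instead
changed_interval = sys.getswitchinterval()
sys.setswitchinterval(default_interval)      # restore the previous value
```

A shorter interval makes threads hand over the GIL more often at the cost of more switching overhead, so the default is usually best left alone.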

Conclusion

In a multi-threaded environment, only one thread can hold the GIL at a time, so a CPU-bound program effectively becomes single-threaded. Running multi-threaded CPU-bound programs on multi-core computers with Python is still problematic.

Python enthusiasts have performed a few more experiments:

1. Free-threading patch: Python 4x-7x slower

2. Atomic incr/decr: Python 30% slower

But none of them was able to remove the GIL and provide at least equivalent performance.

There are various other Python interpreters available that use a garbage collection mechanism instead of reference counting, and some of them get rid of the GIL as well:

- CPython: reference counting, GIL, C APIs

- Jython: garbage collection, no GIL, no C APIs

- IronPython: garbage collection, no GIL, no C APIs

- PyPy: garbage collection, no native CPython C API (only a compatibility layer); note that PyPy still has a GIL

Garbage collection is another approach to managing the lifetime of objects. Every so often, the collector stops all computation and examines all the objects in memory. Any object that is no longer reachable from your program is not in use anymore and can be collected. Moving CPython to GC would require changes in the C extensions, and everybody knows how important the C APIs are to Python’s popularity.
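The idea of collecting unreachable objects can be seen on a small scale in CPython itself, which ships a cycle collector in the gc module alongside reference counting. The sketch below is only an illustration of reachability-based collection, not of how a fully garbage-collected interpreter works, and the Node class is a made-up example.

```python
import gc
import weakref


class Node:
    """A small object that can point at another Node."""

    def __init__(self):
        self.other = None


gc.disable()                         # keep the automatic collector out of the way for the demo
a, b = Node(), Node()
a.other, b.other = b, a              # a and b reference each other: a cycle
probe = weakref.ref(a)               # lets us observe the object without keeping it alive

del a, b                             # the program can no longer reach either object
alive_before = probe() is not None   # each count is still one, so nothing was freed

gc.collect()                         # examine the objects and free the unreachable cycle
alive_after = probe() is not None
gc.enable()

print(alive_before, alive_after)     # True False
```

Reference counting alone never frees the pair, because each object keeps the other's count above zero. The collector frees them because nothing in the program can reach them anymore.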

There is no definitive experiment comparing the performance of garbage collection and reference counting, but the common view is that GC performs roughly as well as RC.

But if we want to use C extensions, then we have to use reference counting, the GIL, and the CPython interpreter. So the only option we have left for supporting multi-threading in CPU-bound programs is atomic incr/decr.

There have been various attempts to remove the GIL; one of the best-known projects is the Gilectomy. I might write another story about it and explain atomic incr/decr in depth as well.